Some of the main features of Apache Airflow are given below.

Easy Integrations: Airflow comes with many operators that allow users to integrate it with a wide range of applications and cloud platforms, such as Google Cloud, AWS, and Azure, for developing scalable applications.

Supports Python: Python is easy to learn and is widely used in the industry for developing modern applications. Airflow uses standard Python for creating DAGs and for integrating with other platforms for deployment.

Open Source: Airflow is free to use and has a large, active community of users, which makes it easier for developers to find resources.

Graphical UI: Airflow comes with a web application to monitor the real-time status of running tasks and DAGs.

Hevo Data, a No-code Data Pipeline, helps load data from any data source, such as databases, SaaS applications, cloud storage, SDKs, and streaming services, and simplifies the ETL process. It supports 100+ data sources (including 30+ free data sources), and setup is a 3-step process: select the data source, provide valid credentials, and choose the destination.

Airflow Sensors are good at making DAGs more event-driven. There are different types of Airflow Sensors, and each one waits for a different kind of condition.

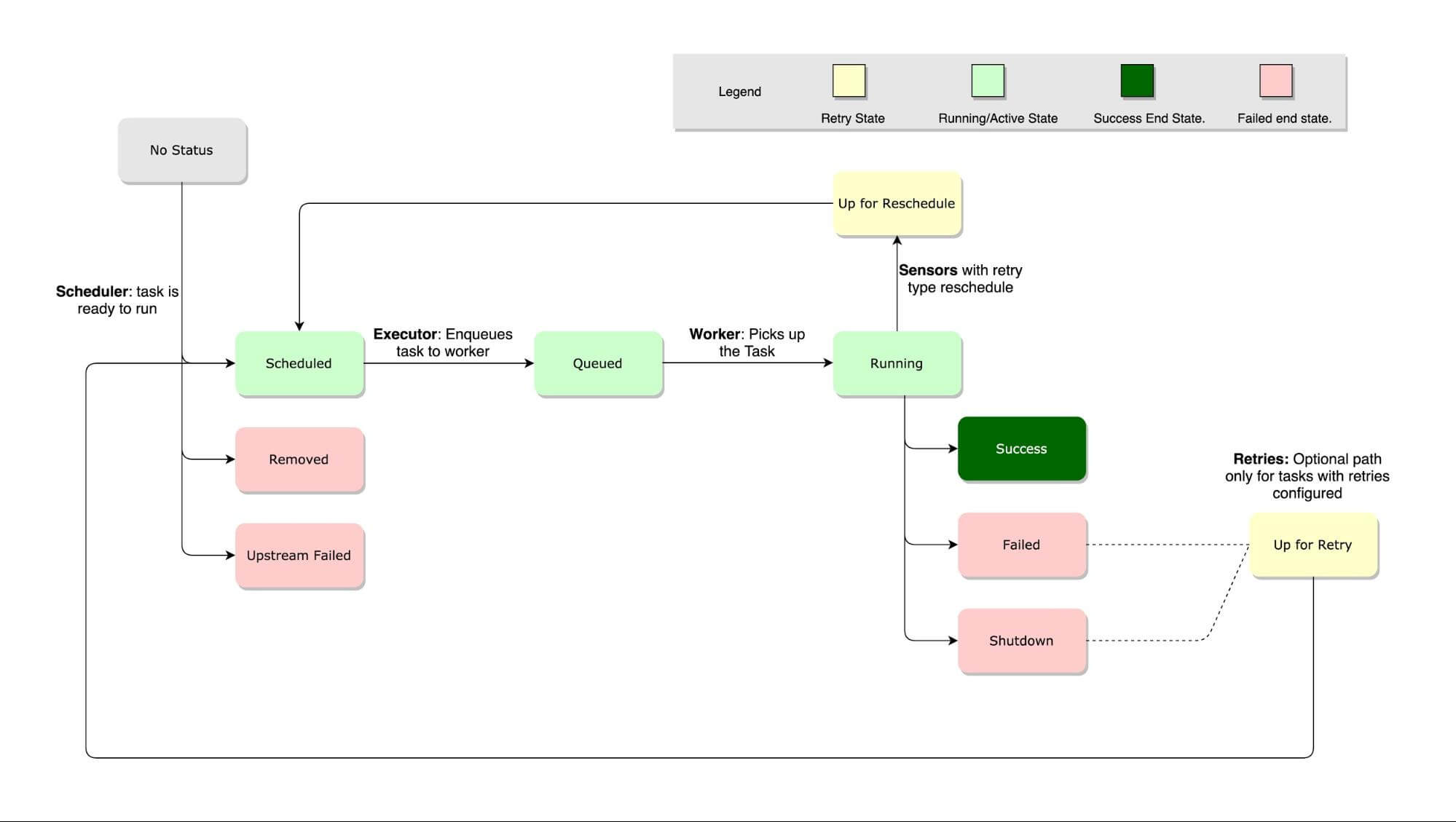

Sensors check for the occurrence of a particular condition before the downstream tasks in a DAG are allowed to run. This makes it easy to execute tasks in the correct order and to allocate the right resources to them, which is why Airflow Sensors are so important to DAGs.

With Airflow, you author your workflows in the form of directed acyclic graphs (DAGs) defined in code. When workflows are defined as code, they become easier to version, maintain, test, and collaborate on. Thus, Airflow makes it easy to manage workflows.
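To make the check-then-wait idea concrete, here is a simplified, stdlib-only sketch of the loop a sensor runs in Airflow's default "poke" mode. It is an illustration, not Airflow's implementation. The function `wait_for`, its parameters, and the injectable `sleep`/`clock` hooks are hypothetical names. They loosely mirror the `poke_interval` and `timeout` arguments that Airflow sensors accept. A real sensor raises Airflow's own timeout exception rather than `TimeoutError`.

```python
import time
from typing import Callable


def wait_for(
    condition: Callable[[], bool],
    poke_interval: float = 1.0,
    timeout: float = 10.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> int:
    """Poke `condition` until it returns True and return the number of pokes.

    Hypothetical helper for illustration only. Raises TimeoutError once
    `timeout` seconds have elapsed without the condition being met.
    """
    start = clock()
    pokes = 0
    while True:
        pokes += 1
        if condition():
            # Condition met: downstream tasks would now be allowed to run.
            return pokes
        if clock() - start >= timeout:
            raise TimeoutError(f"condition not met after {pokes} pokes")
        # Wait before checking again, like a sensor's poke_interval.
        sleep(poke_interval)


if __name__ == "__main__":
    state = {"checks": 0}

    def file_has_arrived() -> bool:
        # Simulated condition that becomes true on the third check.
        state["checks"] += 1
        return state["checks"] >= 3

    print(wait_for(file_has_arrived, poke_interval=0.01, timeout=1.0))  # 3
```

The `sleep` and `clock` hooks exist only so the loop can be exercised without real waiting. Passing a fake clock makes the timeout behaviour deterministic.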

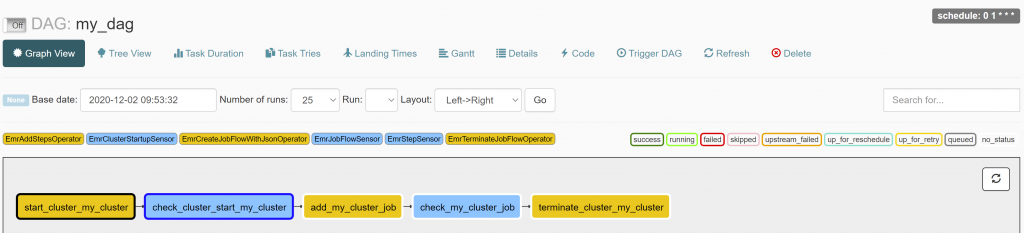

Apache Airflow is a popular workflow management platform that allows companies to programmatically author, schedule, and monitor their Data Pipeline tasks. Airflow lets developers hold back certain tasks until a defined condition is met, and it uses special operators such as the S3KeySensor to manage and configure these events. The S3KeySensor checks for a key in an S3 bucket at regular intervals, and once the key exists, the defined downstream tasks can run.

In this article, you will learn about the Airflow S3KeySensor, understand how to define it with the help of its syntax, and implement it using simple Python code.

Prerequisites: Apache Airflow installed on your local machine.
